The "Token Tax" of the Human Web

The modern web was built for human eyes. It is heavy, complex, and full of design logic.

But as internet traffic shifts from human browsers to autonomous AI agents, a massive inefficiency has emerged. When you try to feed a standard webpage to a Large Language Model (LLM), you aren’t just sending the text you care about; you are sending thousands of lines of nested <div> elements, inline CSS, tracking scripts, and SVGs.

This bloat creates what I call the Token Tax.

A single blog post can easily consume 15,000 tokens of your context window simply because of the HTML scaffolding. This degrades the model’s reasoning capabilities, increases latency, and drastically inflates your API costs.

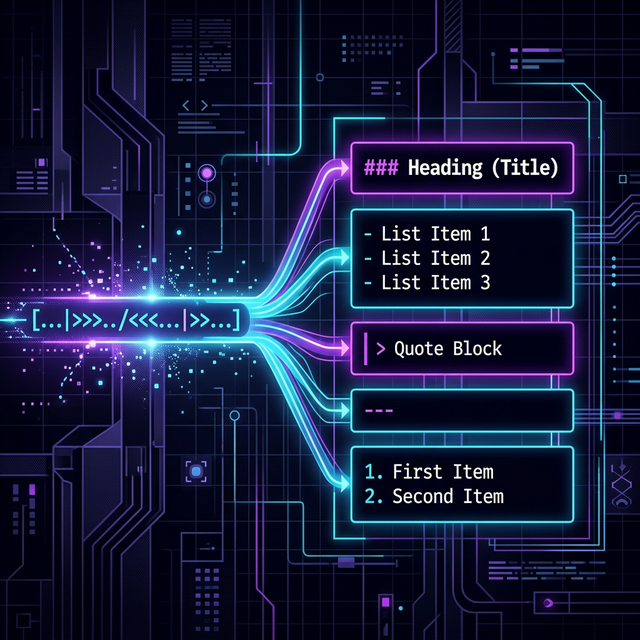
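To get a feel for the Token Tax on a given page, a rough sketch like the following compares the estimated token count of the raw HTML against that of its visible text. The four-characters-per-token ratio is a crude heuristic assumed here, not a real tokenizer, and the helper names (`visible_text`, `estimate_tokens`, `token_tax`) are illustrative only.

```python
from html.parser import HTMLParser

# Assumption: ~4 characters per token, a rough rule of thumb for English text.
CHARS_PER_TOKEN = 4


class _TextExtractor(HTMLParser):
    """Collects visible text, ignoring the contents of <script> and <style>."""

    def __init__(self):
        super().__init__()
        self._skip_depth = 0
        self.chunks = []

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in ("script", "style") and self._skip_depth:
            self._skip_depth -= 1

    def handle_data(self, data):
        if not self._skip_depth and data.strip():
            self.chunks.append(data.strip())


def visible_text(html: str) -> str:
    """Return the human-readable text of an HTML document."""
    parser = _TextExtractor()
    parser.feed(html)
    parser.close()
    return " ".join(parser.chunks)


def estimate_tokens(text: str) -> int:
    """Very rough token estimate based on character count."""
    return len(text) // CHARS_PER_TOKEN


def token_tax(html: str) -> tuple[int, int]:
    """Return (estimated tokens of raw HTML, estimated tokens of visible text)."""
    return estimate_tokens(html), estimate_tokens(visible_text(html))
```

The gap between the two numbers is only an approximation of the overhead; a real measurement would use the tokenizer of the model you are calling.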

LLMs do not need HTML. They work best with Markdown.

Markdown is the native language—the lingua franca—of the AI era. It is clean, structurally semantic, and token-efficient. It explicitly identifies headers, code blocks, and tables without the noise.

Zero Friction: The Inline Proxy

To bridge the gap between the human web and the agent web, the extraction process must be completely frictionless.

That is why I built html2md. It transforms any page on the internet into AI-native Markdown with zero installation. Usage is simple: prepend my public conversion proxy to any URL in your browser or curl request:

https://2md.traylinx.com/https://example.com

In milliseconds, the engine navigates to the target, bypasses WAF protections using stealth techniques, executes the necessary JavaScript to render SPAs, strips out the noise, and returns pure, unadulterated Markdown.

No scraping scripts. No complex parsers. Just the raw data your agent needs.
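For use inside an agent, the prepend-the-proxy pattern above can be sketched in a few lines of Python using only the standard library. This is a minimal sketch under assumptions: that the proxy returns the Markdown as the plain response body, that it accepts the target URL appended verbatim (whether query strings need extra encoding is not covered here), and that the `User-Agent` value and function names are hypothetical.

```python
from urllib.request import Request, urlopen

# Proxy prefix taken from the example URL in this post.
PROXY_BASE = "https://2md.traylinx.com/"


def to_proxy_url(target_url: str) -> str:
    """Prepend the conversion proxy to an absolute http(s) URL."""
    if not target_url.startswith(("http://", "https://")):
        raise ValueError("target_url must be an absolute http(s) URL")
    return PROXY_BASE + target_url


def fetch_markdown(target_url: str, timeout: float = 30.0) -> str:
    """Fetch the Markdown rendering of target_url through the proxy.

    Assumption: the response body is the Markdown text itself.
    """
    request = Request(
        to_proxy_url(target_url),
        headers={"User-Agent": "example-agent/0.1"},  # hypothetical value
    )
    with urlopen(request, timeout=timeout) as response:
        charset = response.headers.get_content_charset() or "utf-8"
        return response.read().decode(charset, errors="replace")


# Usage (performs a real network request):
# markdown = fetch_markdown("https://example.com")
```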

The Agentify & File2MD Pipelines

For complex data engineering and agent workflows, single URLs are rarely enough. That’s why I built html2md with a full suite of tools designed to build entire RAG (Retrieval-Augmented Generation) databases:

The Extraction Arsenal

- Deep Crawling: Point the system at a root domain, set a depth, and watch it spider the entire site, returning a cleanly structured `.zip` of Markdown files.

- File2MD: The internet isn't just websites. You can upload PDFs, images, or even YouTube videos (MP4, MP3) directly to the Web UI. The engine uses vision models and Whisper transcription to convert multimodal media directly into text.

- Agent Auto-Discovery: The API exposes `/llms.txt` and `/llms-full.txt` endpoints, allowing standard AI agents to self-discover and use the extraction tools dynamically.
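As an illustration of the auto-discovery step, an agent could read the two discovery documents before deciding which tools to call. The sketch below assumes the endpoints live on the same host as the public proxy (`https://2md.traylinx.com`) and that they return plain UTF-8 text; both are assumptions, and the function names are hypothetical.

```python
from urllib.parse import urljoin
from urllib.request import urlopen

# Assumption: the discovery endpoints are served from the proxy's host.
API_BASE = "https://2md.traylinx.com"
DISCOVERY_PATHS = ("/llms.txt", "/llms-full.txt")


def discovery_urls(base: str = API_BASE) -> list[str]:
    """Build the absolute URLs of the discovery documents."""
    return [urljoin(base, path) for path in DISCOVERY_PATHS]


def fetch_discovery_doc(url: str, timeout: float = 30.0) -> str:
    """Download one discovery document as text (assumed to be UTF-8)."""
    with urlopen(url, timeout=timeout) as response:
        return response.read().decode("utf-8", errors="replace")


# Usage (performs real network requests):
# for url in discovery_urls():
#     print(url, len(fetch_discovery_doc(url)))
```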

Scaling AI Data Infrastructure

High-quality data is the primary advantage for any AI application. html2md ensures that your agents and RAG systems are fed the cleanest, most token-efficient data possible, reducing the “Token Tax” drastically.

Try it out, integrate it into your agents, and let me know what you build.

Sebastian Schkudlara
