If you’ve ever tried to feed a website into an LLM, you know the pain. The web is messy. Cookie banners, JavaScript-heavy SPAs, Cloudflare walls, deeply nested DOM trees — and all you wanted was the actual text.

That’s exactly why I built html2md. It takes any URL — whether it’s a blog post, a React app, or an entire documentation site — and converts it into clean, high-fidelity Markdown. No boilerplate, no noise.

The One-Liner Trick

Here’s the part I love the most. You don’t need to install anything. You don’t even need a terminal.

Just prepend https://2md.traylinx.com/ to any URL:

https://2md.traylinx.com/https://example.com

That’s it. You get back clean Markdown, ready to paste into a prompt, a docs repo, or wherever you need it.
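
If you would rather script it than paste URLs into a browser, the prefix trick is easy to wrap. This is a minimal Python sketch using only the standard library. The helper names (`to_markdown_url`, `fetch_markdown`), the timeout, and the assumption that the response body is UTF-8 Markdown text are illustrative and not taken from the html2md docs.

```python
from urllib.parse import urlsplit
from urllib.request import Request, urlopen

# The prefix described above.
PREFIX = "https://2md.traylinx.com/"


def to_markdown_url(target: str) -> str:
    """Prepend the html2md prefix to an absolute http(s) URL."""
    parts = urlsplit(target)
    if parts.scheme not in ("http", "https") or not parts.netloc:
        raise ValueError(f"expected an absolute http(s) URL, got {target!r}")
    return PREFIX + target


def fetch_markdown(target: str, timeout: float = 60.0) -> str:
    """Fetch the converted page.

    Assumption: the service answers with the Markdown as a UTF-8 text body.
    """
    request = Request(to_markdown_url(target), headers={"User-Agent": "html2md-example"})
    with urlopen(request, timeout=timeout) as response:
        return response.read().decode("utf-8", errors="replace")
```

Calling `fetch_markdown("https://example.com")` requests `https://2md.traylinx.com/https://example.com` and returns whatever text the service sends back.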

Under the hood, Puppeteer renders the full page (yes, even React and Next.js apps), stealth plugins handle Cloudflare and WAFs, and a hybrid Readability + Trafilatura pipeline extracts just the content that matters. No scripts, no nav bars, no cookie consent modals.
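
How the two extractors are combined is not spelled out here, so treat the following as one plausible arrangement and not the actual html2md implementation: try a primary extractor, and fall back to a second one when the first returns too little text. The extractors are passed in as plain callables, and `extract_hybrid` and `min_chars` are hypothetical names.

```python
from typing import Callable, Optional

# An extractor takes rendered HTML and returns the main text, or None on failure.
Extractor = Callable[[str], Optional[str]]


def extract_hybrid(
    html: str,
    primary: Extractor,
    fallback: Extractor,
    min_chars: int = 200,  # hypothetical threshold for "enough content"
) -> str:
    """Use the primary result unless it is missing or too short, then try the fallback."""
    first = (primary(html) or "").strip()
    if len(first) >= min_chars:
        return first
    second = (fallback(html) or "").strip()
    # Keep whichever extractor recovered more text.
    return second if len(second) > len(first) else first
```

In a real setup `primary` and `fallback` would be thin wrappers around a Readability port and Trafilatura, in whichever order works better for your pages.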

Made for AI Agents

But here’s where it gets really interesting. Modern AI coding assistants — Cursor, Windsurf, Claude Code — need structured context, not raw HTML soup.

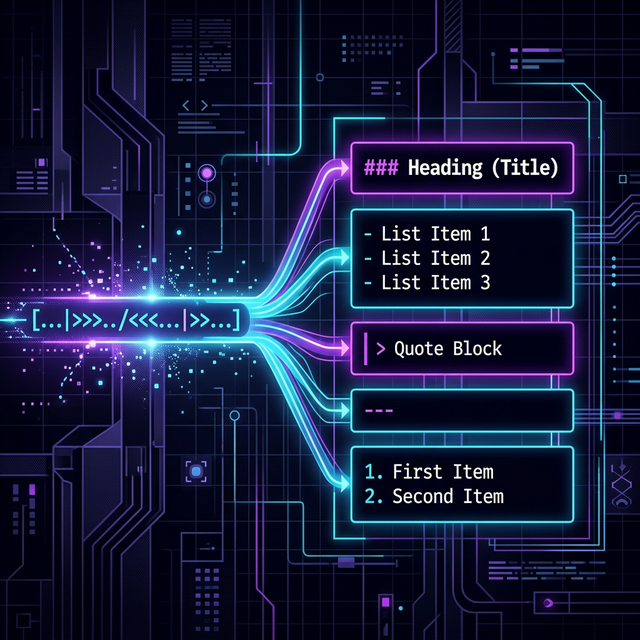

So I built the Agentify Pipeline. Point it at any docs site, and it will:

- Crawl the entire domain (you control the depth)

- Generate a SKILL.md manifest, a routing index for agents

- Create an llms.txt file (following the llms-txt.org standard)

- Strip out all UI noise automatically

The result? A ready-to-use “Skill Bundle” your coding assistant can consume instantly.
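
To make the llms.txt step concrete, here is a small Python sketch that renders crawled pages into the layout the llms.txt proposal describes: an H1 title, a blockquote summary, then H2 sections containing link lists. The `Page` class and `build_llms_txt` function are hypothetical names for illustration. The real pipeline's output may differ in detail.

```python
from dataclasses import dataclass


@dataclass
class Page:
    """One crawled page (hypothetical structure for this example)."""
    title: str
    url: str
    description: str = ""


def build_llms_txt(site_title: str, summary: str, sections: dict[str, list[Page]]) -> str:
    """Render an llms.txt document: H1 title, blockquote summary, H2 link lists."""
    lines = [f"# {site_title}", "", f"> {summary}", ""]
    for heading, pages in sections.items():
        lines.append(f"## {heading}")
        lines.append("")
        for page in pages:
            entry = f"- [{page.title}]({page.url})"
            if page.description:
                entry += f": {page.description}"
            lines.append(entry)
        lines.append("")
    # End the file with exactly one trailing newline.
    return "\n".join(lines).rstrip() + "\n"
```

Sections keep their insertion order, so the order in which you add them to the dictionary is the order an agent will read them in.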

Not Just Web Pages

I didn’t stop at HTML. With the File2MD mode, you can throw PDFs, images, YouTube links, MP4s, and audio files at it too:

- Vision models parse dense PDFs and describe images

- Whisper transcription pulls spoken content out of videos and audio
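
The split described above (vision models for PDFs and images, transcription for video and audio) boils down to routing by media type. Here is a hedged Python sketch of that routing using the standard `mimetypes` module. The function name `pick_converter`, the labels `"vision"` and `"transcribe"`, and the YouTube host check are assumptions for illustration, not File2MD's actual logic.

```python
import mimetypes
from urllib.parse import urlsplit


def pick_converter(source: str) -> str:
    """Return "vision" or "transcribe" for a file path or URL (hypothetical labels)."""
    parts = urlsplit(source)
    host = parts.netloc.lower()
    # Assumption: YouTube links are recognised by host and sent to transcription.
    if host in ("youtube.com", "youtu.be") or host.endswith(".youtube.com"):
        return "transcribe"
    # Guess the media type from the file extension.
    mime, _ = mimetypes.guess_type(parts.path or source)
    mime = mime or ""
    if mime == "application/pdf" or mime.startswith("image/"):
        return "vision"
    if mime.startswith(("audio/", "video/")):
        return "transcribe"
    raise ValueError(f"no converter for {source!r}")
```

Guessing from the extension is cheap but naive. A production service would more likely inspect the response's `Content-Type` header or the file's magic bytes.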

Give It a Spin

Whether you’re a CLI person, an API person, or a “just give me a web UI” person — html2md has you covered. Interactive terminal, REST API with real-time NDJSON streaming, or a clean SPA frontend. Pick your flavor.
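
NDJSON just means one JSON document per line, which is what makes it easy to consume while a crawl is still running. The sketch below shows the client side in Python. It deliberately leaves out the endpoint, and the event fields in the usage example are made up, since neither is documented in this post.

```python
import json
from typing import Iterable, Iterator


def iter_ndjson(lines: Iterable[bytes]) -> Iterator[dict]:
    """Yield one parsed object per non-empty line of an NDJSON stream.

    Assumption: every line holds a JSON object.
    """
    for raw in lines:
        line = raw.strip()
        if not line:
            continue  # tolerate blank or keep-alive lines
        yield json.loads(line)


# Usage with an in-memory stream; the "event" and "url" fields are hypothetical.
stream = [
    b'{"event": "page", "url": "https://example.com"}\n',
    b"\n",
    b'{"event": "done"}\n',
]
events = list(iter_ndjson(stream))
```

A response object from `urllib.request.urlopen` can be iterated line by line, so it can be passed to `iter_ndjson` directly and each event handled as soon as it arrives.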

It’s built for scale and speed.

Sebastian Schkudlara

