Over the past year, the AI engineering world rallied around a noble goal: standardizing how Large Language Models (LLMs) interact with external systems. The result was the Model Context Protocol (MCP)—a powerful specification that sparked a gold rush of developers building “MCP Servers.”

But in our rush to give autonomous agents tools, we made a massive architectural mistake. We fell back on the muscle memory of the microservices era.

We reinvented the monolith.

When you build an MCP server to give your agent access to Google Drive, GitHub, or a PostgreSQL database, you are building a persistent, stateful daemon. You are wrapping simple APIs in heavy Python or Node.js servers, managing JSON-RPC event loops over stdio, and defining rigid, static JSON schemas.

We applied “API thinking” to a “cognitive” entity. We are treating AI agents like dumb frontend web clients waiting for a JSON payload. But agents aren’t dumb clients; they are reasoning engines and native text processors.

There is a vastly more elegant, lightweight, and extensible solution hiding in plain sight. I call it the Unix Agent Paradigm (powered by the CLI-Skill Architecture), and it is rooted deeply in a 50-year-old computer science philosophy.

Dumb Tools, Smart Agents

Think about how traditional Unix works. Utilities like grep, cat, and ls are simple, stateless executables. They don’t run as background daemons. They don’t have JSON-RPC endpoints. They take text in, and they spit text out.

“Write programs to handle text streams, because that is a universal interface.” (Doug McIlroy’s Unix philosophy, as summarized by Peter H. Salus in A Quarter Century of UNIX, 1994.)

Large Language Models are the ultimate universal text processors. Yet, instead of letting them process text, we are forcing them to communicate via rigid API wrappers.

The CLI-Skill architecture strips agent tooling down to the bare metal. As demonstrated by tools like the gws (Google Workspace) binary, this approach requires exactly two things:

- The CLI (The Brawn): A standard, stateless, self-contained executable (Go, Rust, Python, Bash) invoked via the standard shell. It does one thing predictably.

- The SKILL.md (The Brain): A simple Markdown file sitting next to the binary. It acts as a programmatic “man page” for the AI, explaining the CLI’s commands, flags, and expected outputs, with exact instructions on how to recover from common errors.
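To make “The Brawn” concrete, here is a minimal sketch of such a CLI in Python. The tool name `csvcol` and its `--column` flag are hypothetical, invented only for illustration. It is stateless: it reads CSV from stdin, writes one value per line to stdout, and reports failures on stderr with a non-zero exit code plus a hint an agent can act on.

```python
import argparse
import csv
import sys
from typing import List, Optional, TextIO


def main(argv: Optional[List[str]] = None,
         stdin: TextIO = sys.stdin,
         stdout: TextIO = sys.stdout,
         stderr: TextIO = sys.stderr) -> int:
    """csvcol (hypothetical tool): print one column of CSV read from stdin."""
    parser = argparse.ArgumentParser(
        prog="csvcol", description="Print one column of a CSV read from stdin.")
    parser.add_argument("--column", required=True,
                        help="header name of the column to print")
    args = parser.parse_args(argv)

    reader = csv.DictReader(stdin)
    header = reader.fieldnames or []
    if args.column not in header:
        # Fail loudly on stderr with a non-zero exit code and a recovery hint,
        # so the agent knows which values it could retry with.
        stderr.write(f"error: no column {args.column!r}; "
                     f"available: {', '.join(header)}\n")
        return 2

    # Plain text, one value per line, so the output pipes into grep, sort, etc.
    # Cells missing from short rows are printed as empty lines.
    for row in reader:
        stdout.write((row[args.column] or "") + "\n")
    return 0
```

To install it, one would save this as an executable file named `csvcol` with a `#!/usr/bin/env python3` shebang and a final `sys.exit(main())` line, after which an agent could call it as `csvcol --column email < users.csv | sort`. Passing `stdin`, `stdout`, and `stderr` as parameters is only a design choice that keeps `main` testable without spawning a process.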

Instead of registering an MCP tool and maintaining a daemon, you simply drop the executable in your PATH and the SKILL.md into the agent’s context.
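Something still has to turn “drop the SKILL.md into the agent’s context” into actual prompt text. The sketch below assumes a hypothetical layout: one directory per tool, named after its executable and holding that tool’s SKILL.md. It skips any skill whose binary `shutil.which` cannot find on PATH, so the agent is never coached on a command it cannot run. The function name `build_skill_context` is illustrative, not an existing API.

```python
import shutil
from pathlib import Path
from typing import List, Tuple


def build_skill_context(skills_dir: Path) -> Tuple[str, List[str]]:
    """Concatenate every <skills_dir>/<tool>/SKILL.md into one context block.

    Assumed (hypothetical) layout: each subdirectory is named after the
    executable it documents. Returns the context text plus the names of
    tools whose executable was not found on PATH.
    """
    sections: List[str] = []
    missing: List[str] = []
    for skill_file in sorted(skills_dir.glob("*/SKILL.md")):
        tool = skill_file.parent.name
        if shutil.which(tool) is None:
            # Documentation for a command the agent cannot run is only noise.
            missing.append(tool)
            continue
        body = skill_file.read_text(encoding="utf-8").strip()
        sections.append(f"## Tool: {tool}\n\n{body}\n")
    return "\n".join(sections), missing
```

The returned text would be appended to the agent’s system prompt, and the `missing` list gives the operator a cheap sanity check that the skills directory and PATH agree.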

Why CLI-Skills Destroy the Daemon Pattern

Here is why the decentralized CLI approach inevitably wins out over heavy MCP servers.

1. Markdown is the Ultimate Schema

MCP forces you to write strict JSON Schemas so the LLM knows how to call your tool. But LLMs are natively optimized for natural language. A SKILL.md file provides context, edge cases, troubleshooting steps, and strategic examples in the LLM’s native tongue. It’s metadata plus strategic coaching, and it can cost fewer tokens than the equivalent JSON tool definitions.

2. Cognitive Offloading to the Agent

With MCP, developers inevitably bloat their servers by hardcoding complex multi-step “routing logic” into the server code. In the CLI-Skill pattern, the tools remain perfectly dumb. All the cognitive routing is offloaded to the LLM. By reading the SKILL.md, the agent figures out exactly how to chain CLI commands together using native Unix pipes (|), inventing workflows the developer never pre-programmed.
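Chaining is ordinary Unix plumbing. When an agent emits something like `gws drive files list | grep report`, a runtime that prefers not to hand the raw string to a shell can split it into argv lists and connect the stages itself. The following is a sketch built on Python’s `subprocess.Popen`; the function name `run_pipeline` is hypothetical, and unlike a default shell it treats a failure in any stage as a failure of the whole pipeline (similar to `set -o pipefail`).

```python
import subprocess
from typing import IO, List, Optional, Sequence


def run_pipeline(commands: Sequence[List[str]], timeout: float = 30.0) -> str:
    """Run argv lists wired stdout->stdin, like `a | b | c`, without a shell."""
    if not commands:
        raise ValueError("need at least one command")
    procs: List[subprocess.Popen] = []
    upstream: Optional[IO[str]] = None
    for argv in commands:
        proc = subprocess.Popen(
            argv,
            # The first stage reads nothing; later stages read the previous stdout.
            stdin=upstream if upstream is not None else subprocess.DEVNULL,
            stdout=subprocess.PIPE,
            text=True,
        )
        if upstream is not None:
            # Drop our copy of the pipe so the upstream stage gets SIGPIPE
            # if this stage exits early.
            upstream.close()
        procs.append(proc)
        upstream = proc.stdout
    # Collect the final stage's output, then reap the earlier stages.
    output, _ = procs[-1].communicate(timeout=timeout)
    for proc in procs[:-1]:
        proc.wait(timeout=timeout)
    # pipefail-style check: any failing stage fails the whole pipeline.
    failed = [proc.args for proc in procs if proc.returncode != 0]
    if failed:
        raise RuntimeError(f"pipeline stage(s) failed: {failed}")
    return output
```

For the example above, the call would be `run_pipeline([["gws", "drive", "files", "list"], ["grep", "report"]])`. Under these semantics, `grep` exiting with status 1 because nothing matched also counts as a failure, which a real runtime might want to special-case.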

3. Secure Ephemeral Execution (The Gateway)

How do we handle authentication without a heavy server daemon to hold the keys? We use a lightweight, local polymorphic gateway like switchAILocal.

The gateway acts as a keyless execution shell: the agent never touches your raw API keys. It simply writes the bash command (e.g., gws drive files list), and the gateway injects the necessary OAuth tokens from the OS keychain at the moment of execution. It runs the sandboxed command, returns the standard output, and tears down the environment. No long-running process holds credentials between calls, and the agent itself is never trusted with a secret, which is the core of a zero-trust design.
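The credential-injection step can be sketched at the process level. This is not switchAILocal’s implementation: the `get_token` callback (standing in for an OS keychain lookup), the `ACCESS_TOKEN` variable name, and `run_with_injected_token` itself are all hypothetical. The token lives only in one child process’s environment, the command runs in a throwaway directory, and the output is scrubbed before it returns to the agent. Environment hygiene like this is not a sandbox by itself; real isolation needs OS-level mechanisms.

```python
import os
import subprocess
import tempfile
from typing import Callable, Dict, List


def run_with_injected_token(argv: List[str],
                            get_token: Callable[[], str],
                            token_env_var: str = "ACCESS_TOKEN",  # hypothetical name
                            timeout: float = 60.0) -> str:
    """Run one command with a credential that exists only for that call."""
    token = get_token()  # stand-in for an OS keychain lookup at execution time
    if not token:
        raise ValueError("credential provider returned an empty token")
    # The scratch directory (used as cwd and HOME) is deleted when the block exits.
    with tempfile.TemporaryDirectory() as scratch:
        # Minimal environment: nothing else leaks in from the gateway's own env.
        env: Dict[str, str] = {
            "PATH": os.environ.get("PATH", os.defpath),
            "HOME": scratch,
            token_env_var: token,
        }
        result = subprocess.run(argv, cwd=scratch, env=env, capture_output=True,
                                text=True, timeout=timeout)
    # Scrub the secret in case the tool echoed it, so it never reaches the agent.
    stdout = result.stdout.replace(token, "[REDACTED]")
    stderr = result.stderr.replace(token, "[REDACTED]")
    if result.returncode != 0:
        # Surface stderr so the agent can apply the recovery steps from its SKILL.md.
        raise RuntimeError(f"exit {result.returncode}: {stderr.strip()}")
    return stdout
```

The agent only ever sees the returned text or the error message; the token is fetched, used, and dropped inside a single call.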

4. “The Forge”: True Agentic Extensibility

Here is the ultimate superpower of the CLI-Skill pattern.

If an agent wants a new tool in an MCP ecosystem, a human developer has to write Python code, rebuild the server, and restart the daemon without crashing the agent’s active session.

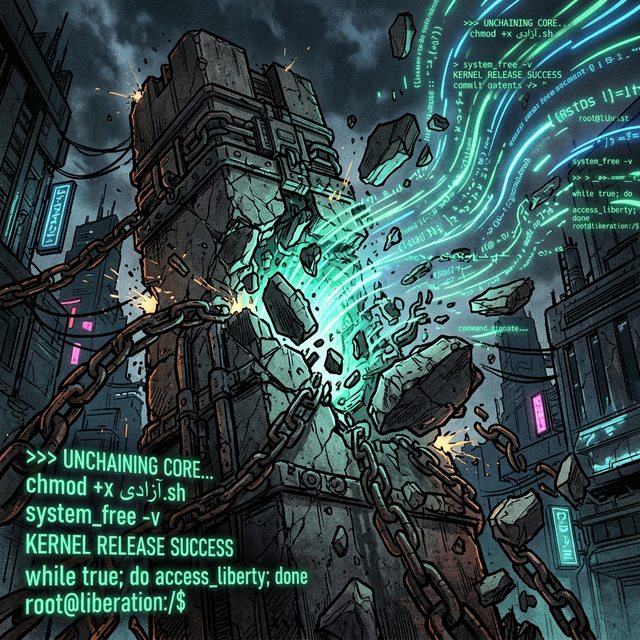

Because the CLI-Skill environment only expects executable scripts and Markdown text, an agent can literally build its own tools. It can identify a missing workflow, write a custom Python script for a niche data-parsing task, use chmod +x to make it executable, write its own SKILL.md explaining how it works, and instantly expand its own capabilities without a single service restart.
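The Forge loop needs nothing more than file writes and a permission change. The sketch below assumes a hypothetical layout in which `bin_dir` is already on the agent’s PATH and each skill lives at `skills_dir/<name>/SKILL.md`; `forge_tool` is an illustrative name, not an existing API.

```python
import stat
from pathlib import Path


def forge_tool(bin_dir: Path, skills_dir: Path, name: str,
               script: str, skill_md: str) -> Path:
    """Install an agent-written script and its SKILL.md (hypothetical helper)."""
    # Refuse names that could escape the target directories.
    if not name or "/" in name or name.startswith("."):
        raise ValueError(f"unsafe tool name: {name!r}")

    bin_dir.mkdir(parents=True, exist_ok=True)
    exe = bin_dir / name
    exe.write_text(script, encoding="utf-8")
    # Equivalent of `chmod a+x`: keep the existing mode and add execute bits.
    exe.chmod(exe.stat().st_mode | stat.S_IXUSR | stat.S_IXGRP | stat.S_IXOTH)

    skill_dir = skills_dir / name
    skill_dir.mkdir(parents=True, exist_ok=True)
    (skill_dir / "SKILL.md").write_text(skill_md, encoding="utf-8")
    return exe
```

Once `forge_tool` returns, the new command can be invoked by name on the very next shell call, with no service restart.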

We are moving from building for AI to teaching AI to build for itself.

The Future is Stateless

The Model Context Protocol was a crucial step in formalizing agentic interfaces, but heavy daemons are not the end-state of agentic computing.

The future of AI tooling isn’t going to be complex, persistent middleware servers. It is going to be small, stateless, pipeable CLI binaries guided by Markdown files. It’s an elegant return to the roots of computer science: stateless executables, plain-text documentation, and the Unix philosophy applied to cognitive architectures.

Build CLIs. Write Markdown. Kill the Daemon.

(Want to try the gateway that makes this architectural shift possible? Check out the open-source switchAILocal repository.)

The Sandbox Illusion: Why Local AI Agents Need Kernel-Level Isolation
